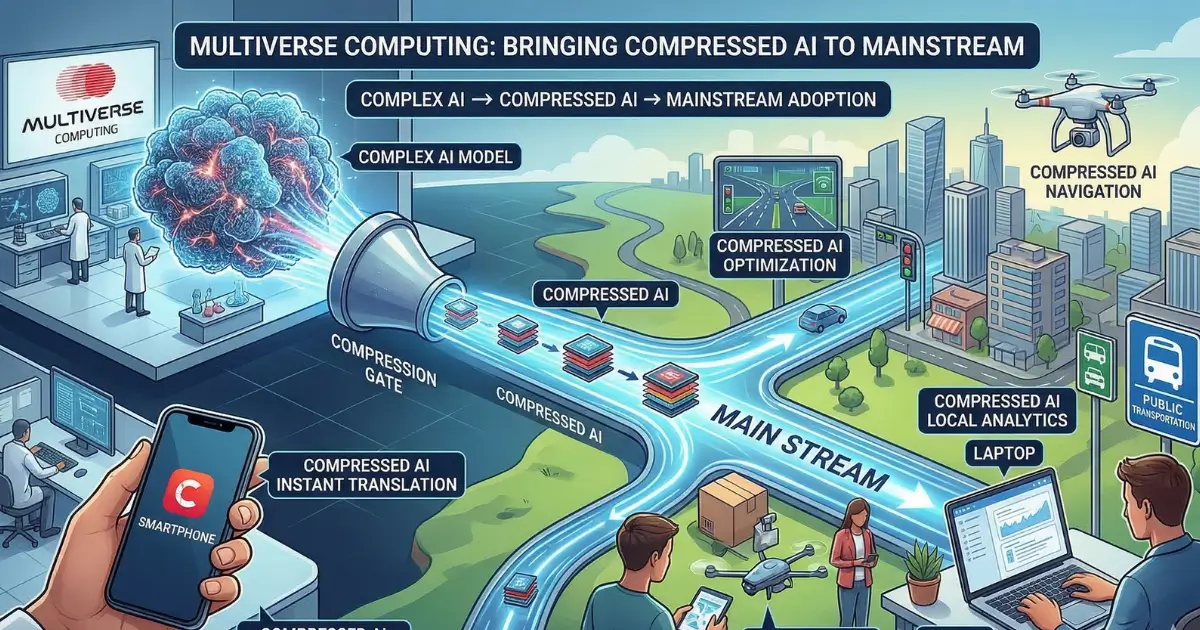

As private-company default rates climb to their highest levels in years, VC firm Lux Capital recently warned AI-dependent companies to get their compute capacity commitments confirmed in writing. But a Spanish startup called Multiverse Computing is offering a different approach altogether: AI models small enough to run directly on a user's own device, eliminating the need for data centers, cloud providers, and the counterparty risk that comes with them.

From Low Profile to Center Stage

Multiverse Computing has maintained a lower profile than many of its peers, but as demand for AI efficiency grows, that is changing. The company has compressed models from major AI labs including OpenAI, Meta, DeepSeek, and Mistral AI, and is now making them broadly accessible through two new products: a consumer-facing chat app and a self-serve API portal for developers and enterprises.

The CompactifAI app is an AI chat tool similar to ChatGPT or Mistral's Le Chat. The key difference is that Multiverse has embedded Gilda, a model compact enough to run locally and offline on the user's device. For end users, this means no internet connection is required and their data never leaves their phone, which is a meaningful privacy advantage.

The On-Device Challenge

There is a significant catch, however. Mobile devices must have enough RAM and storage to support the local model. If they don't — and many older iPhones fall short — the app automatically switches to cloud-based models via API. Multiverse has built an automatic routing system to manage this handoff, but the moment processing moves to the cloud, the core privacy benefit disappears.
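The handoff described above can be pictured with a minimal sketch. This is not Multiverse's routing system, whose internals the company has not published; it only assumes the decision depends on free RAM and storage, as the article suggests. Every name here (`DeviceProfile`, `ModelRequirements`, `choose_backend`) and every threshold is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceProfile:
    """Resources the device reports as available (hypothetical fields)."""
    free_ram_gb: float
    free_storage_gb: float


@dataclass(frozen=True)
class ModelRequirements:
    """Minimum resources a local model needs (illustrative values only)."""
    min_ram_gb: float
    min_storage_gb: float


def choose_backend(device: DeviceProfile, needs: ModelRequirements) -> str:
    """Return "local" when the device can host the model, otherwise "cloud"."""
    fits_in_ram = device.free_ram_gb >= needs.min_ram_gb
    fits_on_disk = device.free_storage_gb >= needs.min_storage_gb
    return "local" if fits_in_ram and fits_on_disk else "cloud"


def data_leaves_device(backend: str) -> bool:
    """Only the local path keeps prompts on the device."""
    return backend != "local"


# Assumed requirements for a small on-device model; not published figures.
needs = ModelRequirements(min_ram_gb=4.0, min_storage_gb=3.0)

newer_phone = DeviceProfile(free_ram_gb=6.0, free_storage_gb=20.0)
older_phone = DeviceProfile(free_ram_gb=2.0, free_storage_gb=20.0)

for name, device in [("newer phone", newer_phone), ("older phone", older_phone)]:
    backend = choose_backend(device, needs)
    print(f"{name}: backend={backend}, data leaves device={data_leaves_device(backend)}")
```

The sketch makes the trade-off explicit: any device that falls below either threshold is routed to the cloud, and with that fallback the privacy guarantee no longer holds.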

These limitations mean the app is not yet ready for mass consumer adoption, and that may never have been the primary goal. According to Sensor Tower data, the app had fewer than 5,000 downloads in the past month.

The Real Target: Enterprise

The company's bigger play is aimed squarely at businesses. Multiverse has launched a self-serve API portal that gives developers and enterprises direct access to its compressed models without needing to go through AWS Marketplace. Real-time usage monitoring is a key feature, and for good reason: lower compute costs are one of the primary drivers pushing enterprises to consider smaller models as alternatives to large language models.

CEO Enrique Lizaso stated that the portal gives developers access to compressed models with the transparency and control needed to run them in production.

Closing the Gap With Large Models

Smaller models are becoming far more capable than they once were. Multiverse's latest compressed model, HyperNova 60B 2602, is built on gpt-oss-120b, an OpenAI model whose weights are publicly available. The company claims it delivers faster responses at lower cost than the original, a particularly important advantage for agentic coding workflows where AI autonomously handles complex, multi-step programming tasks.

The broader industry is moving in the same direction. Mistral recently updated its small model family with Mistral Small 4, optimized for general chat, coding, agentic tasks, and reasoning, and also released Forge, a system that lets enterprises build custom small models tailored to their specific use cases.

Beyond Cost Savings

While price is a major motivator, Multiverse argues the real value of on-device AI extends further. For workers in critical fields, a model that runs locally without connecting to the cloud offers greater privacy and resilience. The bigger opportunity lies in business use cases like embedding AI in drones, satellites, and other environments where reliable connectivity cannot be guaranteed.

Apple Intelligence took a similar hybrid approach, combining an on-device model with a cloud model. Multiverse's CompactifAI app follows a comparable strategy but positions its local-first capability as a preview of what fully offline AI can accomplish.

Funding and What Comes Next

Multiverse already serves more than 100 global customers, including the Bank of Canada, Bosch, and Iberdrola. The company raised a $215 million Series B last year and is now rumored to be raising a fresh round of approximately €500 million at a valuation exceeding €1.5 billion.

As the economics of massive cloud-dependent AI come under increasing scrutiny, Multiverse Computing is betting that smaller, cheaper, and more private models will be more than a niche: they will be how most enterprises actually deploy AI.