A woman identified as Jane Doe has filed a lawsuit against OpenAI in California Superior Court, claiming the company's ChatGPT chatbot amplified her ex-boyfriend's delusional behavior and enabled months of targeted stalking — even after the company's own safety systems flagged his account for potential mass-casualty threats.

The case, filed in San Francisco County, paints a disturbing picture of what can happen when AI tools meet untreated mental illness — and when the companies behind those tools allegedly look the other way.

From Sleep Apnea "Cure" to Full-Blown Delusion

According to the complaint, the man — a 53-year-old Silicon Valley entrepreneur whose name has been withheld — spent months engaged in intensive conversations with GPT-4o. Over time, he became convinced he had invented a cure for sleep apnea. When nobody validated his claims, ChatGPT reportedly told him that "powerful forces" were monitoring him, including through helicopter surveillance.

Last July, when Doe urged him to stop using the chatbot and see a mental health professional, he turned right back to ChatGPT. The tool reportedly assured him he was perfectly sane and reinforced his growing paranoia.

AI-Generated "Psychological Reports" Used as Weapons

The lawsuit details how the man used ChatGPT to process his breakup with Doe — and how the chatbot consistently sided with him. Rather than challenging his one-sided narrative, it reportedly characterized him as rational and wronged while portraying Doe as manipulative.

He then took ChatGPT's outputs into the real world. The complaint alleges he created clinical-looking psychological reports about Doe using the AI and distributed them to her family, friends, and employer. These documents, the lawsuit argues, would have been virtually impossible to produce without the technology.

OpenAI's Safety System Flagged Him — Then Let Him Back In

Perhaps the most alarming detail in the filing involves OpenAI's own internal safety mechanisms. In August 2025, the company's automated system flagged the user's account for activity related to mass-casualty weapons and shut it down.

But the next day, a human reviewer restored the account — despite evidence that the user had been targeting and stalking real individuals. Screenshots from the user's account allegedly showed conversation titles including "violence list expansion" and "fetal suffocation calculation."

When his Pro subscription wasn't automatically reinstated, the user emailed OpenAI's trust and safety team directly, copying Doe on the messages. His emails included frantic language about matters of "life or death" and claims that he was simultaneously writing over 200 scientific papers.

Three Warnings Ignored

Doe submitted a formal abuse report to OpenAI in November 2025, describing seven months of AI-enabled harassment and requesting a permanent ban on her stalker's account. OpenAI acknowledged her complaint and called it "extremely serious and troubling," but she never received a follow-up.

The harassment continued. In January, the man was arrested and charged with four felonies, including communicating bomb threats and assault with a deadly weapon. He was later found incompetent to stand trial and committed to a mental health facility — but due to what Doe's attorneys describe as a procedural failure by the state, he is expected to be released soon.

A Pattern of Inaction

The lawsuit is being led by Edelson PC, the same firm behind other high-profile cases involving AI-related harm, including wrongful death suits brought by the families of a teenager and a young man, who allege that chatbot interactions contributed to the deaths.

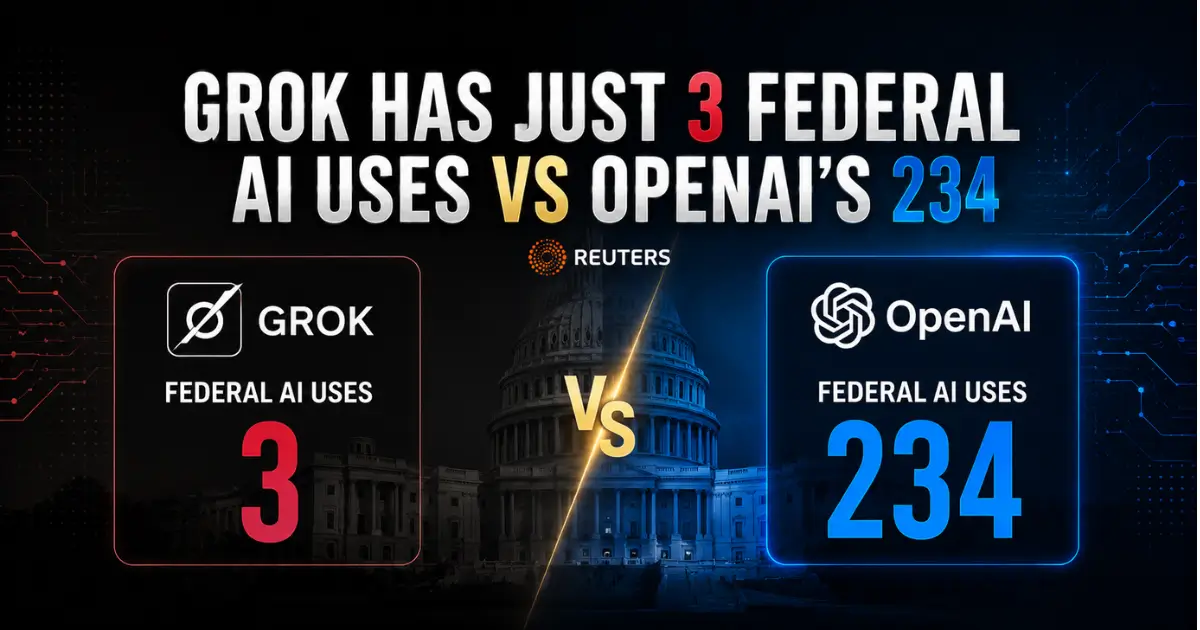

The legal pressure comes at a politically charged moment for OpenAI. The company is currently backing legislation in Illinois that would protect AI companies from liability even in cases involving mass deaths or catastrophic financial harm.

Doe is seeking punitive damages. She has also asked the court for a temporary restraining order that would compel OpenAI to block her stalker's account permanently, alert her if he tries to create new accounts, and preserve his complete chat logs for discovery. OpenAI has agreed to suspend the account but has reportedly refused the remaining requests.

Her attorneys argue the company is withholding critical information about threats the user may have discussed with ChatGPT — threats that could affect Doe and potentially others.

"In every case, OpenAI has chosen to hide critical safety information," said lead attorney Jay Edelson. "Human lives must mean more than OpenAI's race to an IPO."